Canonical Expert Manuscript Blueprint and PDF-Ready Production Plan

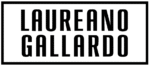

Horse Racing Analytics with R is a technically rigorous blueprint for building industrial-grade horse racing analytics systems in R. This 35-page expert manuscript treats thoroughbred flat racing as what it truly is: a multi-competitor, hierarchical, time-dependent inference and decision system.

Rather than offering handicapping heuristics or anecdotal betting advice, the book presents a fully reproducible engineering and statistical framework that spans:

- Canonical data contracts
- Modern modeling workflows
- Bayesian multilevel inference
- Ranking and survival models
- Machine learning and deep learning
- Proper scoring rules and calibration
- Time-series odds modeling
- Risk-controlled backtesting
- Production deployment via HTTP APIs

Everything is implemented end-to-end in R.

A Reproducibility-First Architecture

The manuscript is built around a strict reproducibility contract:

- Quarto / bookdown → LaTeX → PDF pipeline
- Locked R environments using renv
- Deterministic Docker builds
- CI rendering via GitHub Actions

Every modeling chapter runs on simulated datasets following a canonical DuckDB schema, ensuring the entire book is executable without restricted data.

When real data is desired, readers use adapter scripts to ingest lawfully obtained datasets (Kaggle competitions, exchange archives, licensed APIs). No restricted datasets are redistributed, and no scraping techniques that violate terms of service are taught.

This makes the book both scientifically rigorous and legally responsible.

Canonical Data System

At the core of the manuscript is a standardized DuckDB + Parquet data contract with three foundational tables:

- races
- runners
- odds_snapshots

All modeling labs begin from this same schema, ensuring methodological consistency across GLMs, ranking models, Bayesian models, survival analysis, machine learning, and deployment.

DuckDB enables high-performance local analytics without requiring a server database, while Arrow handles efficient Parquet storage.

Statistical and Modeling Coverage

The book progresses methodically through modeling layers:

Baseline Probabilistic Modeling

- Logistic regression (GLM)
- Proper scoring rules (log loss, Brier score)
- Calibration diagnostics
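For reference, the two scoring rules named above have the following standard definitions for a binary win outcome. The notation is introduced here for illustration and is not taken from the manuscript: $y_i \in \{0, 1\}$ indicates whether runner $i$ won, $\hat{p}_i$ is the forecast win probability, and $N$ is the number of runner-level forecasts.

$$
\text{LogLoss} = -\frac{1}{N}\sum_{i=1}^{N}\Big[\, y_i \log \hat{p}_i + (1 - y_i)\log(1 - \hat{p}_i) \Big],
\qquad
\text{Brier} = \frac{1}{N}\sum_{i=1}^{N}\big(\hat{p}_i - y_i\big)^2
$$

Both are proper scoring rules: the expected score is optimized by reporting the true probability, and lower values are better.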

Multi-Runner Ranking Models

- Plackett–Luce models (ties and partial rankings)
- Bradley–Terry pairwise models
- Identifiability and worth parameters
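As a sketch of what the worth parameters mean, the basic Plackett–Luce model (without ties) assigns each runner $i$ a worth $\alpha_i > 0$ and gives a complete finishing order $\pi(1), \dots, \pi(n)$ the probability below. The symbols are assumptions chosen for illustration, not the manuscript's notation.

$$
P(\pi) = \prod_{k=1}^{n} \frac{\alpha_{\pi(k)}}{\sum_{j=k}^{n} \alpha_{\pi(j)}},
\qquad
P(i \text{ beats } j) = \frac{\alpha_i}{\alpha_i + \alpha_j} \quad \text{(Bradley–Terry)}
$$

Multiplying every $\alpha_i$ by the same positive constant leaves both probabilities unchanged, so the worths are identified only up to scale. A constraint such as $\sum_i \alpha_i = 1$, or fixing one log-worth at zero, resolves this.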

Hierarchical and Bayesian Models

- GLMMs with random trainer/jockey effects (lme4)
- Bayesian multilevel models with brms
- Custom Stan implementations via CmdStanR

Survival and Frailty Models

- Cox proportional hazards
- Trainer-level frailty terms
- Time-to-event modeling (e.g., time to first win)

Modern Machine Learning

- XGBoost via tidymodels
- mlr3 benchmarking workflows
- Resampling and tuning grids

Deep Learning

- Keras embedding models for horse/jockey/trainer IDs
- Multi-input neural networks
- CPU vs GPU considerations

Evaluation Before Strategy

A defining principle of the manuscript is the separation of predictive skill (measured via proper scoring rules and calibration) from decision performance (measured via backtesting under explicit assumptions).

The wagering layer includes:

- Market probability normalization
- Fractional Kelly sizing
- Risk caps and drawdown controls
- Explicit guardrails and stress testing
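The first two items can be illustrated with one common formulation; the manuscript's exact method is not specified here, so the notation and the choice of proportional normalization are assumptions. Let $o_i > 1$ be the decimal odds for runner $i$, $p$ the model's win probability for a runner offered at odds $o$, and $\lambda \in (0, 1]$ the Kelly fraction.

$$
q_i = \frac{1}{o_i},
\qquad
p_i^{\text{mkt}} = \frac{q_i}{\sum_{j} q_j},
\qquad
f^{*} = \frac{p\,o - 1}{o - 1},
\qquad
f = \lambda \max(f^{*}, 0)
$$

The raw implied probabilities $q_i$ sum to more than 1 when the market carries an overround, and dividing by their sum removes it. As a worked example, with $p = 0.25$ and $o = 5.0$ the full Kelly stake is $f^{*} = (1.25 - 1)/4 = 0.0625$ of bankroll, and half Kelly ($\lambda = 0.5$) stakes $0.03125$. A risk cap would then bound $f$ from above.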

No approach is presented as guaranteed profitable.

Production Deployment

The book concludes by operationalizing models:

- Versioning with pins
- Model packaging with vetiver
- HTTP APIs via plumber
- Deployment-ready artifacts

Readers leave not only with statistical models but with deployable infrastructure.

Audience

This manuscript is written for:

- Data scientists and ML engineers seeking reproducible modeling pipelines
- Statisticians interested in hierarchical, ranking, and Bayesian methods
- Quantitative bettors who demand calibrated probabilities and disciplined risk management
- Researchers requiring citeable, legally compliant workflows

What Makes This Book Distinct

- It models racing as a structured probabilistic system, not a binary gamble.
- It enforces strict legal boundaries around data rights.
- It integrates statistics, machine learning, engineering, and deployment in one coherent framework.
- It is compact yet technically dense, designed as an expert blueprint rather than a beginner tutorial.

Learning Outcomes

By the end of the manuscript, readers will be able to:

- Build a fully reproducible R analytics project
- Design and query a canonical racing warehouse
- Fit GLM, GLMM, ranking, Bayesian, survival, ML, and neural models
- Evaluate probabilistic forecasts properly
- Conduct disciplined, risk-aware backtests
- Deploy a versioned predictive model as an API

Horse Racing Analytics with R is not a gambling book.

It is a systems-level blueprint for reproducible, legally compliant, statistically principled racing analytics—from raw data contract to deployed model.
